What Is Binomial Distribution?

Binomial distribution is a discrete probability distribution that models the number of successes in a fixed number of independent trials, where each trial produces exactly one of two outcomes and the probability of success stays the same throughout. It takes its name from the binomial theorem, whose coefficients appear in its formula. Jacob Bernoulli analyzed it in Ars Conjectandi (published posthumously in 1713), and it remains one of the foundational models of probability theory.

The key word is counting. You are not asking "what is the probability of one coin landing heads?" You are asking "what is the probability of getting exactly 7 heads in 10 tosses?" That counting question is precisely what binomial distribution answers.

The distribution is defined by two parameters: n (number of trials) and p (probability of success per trial).

🔑 Key Takeaways

The most important points to understand before working with binomial distribution.

Binomial distribution counts successes, not probabilities of single events. It answers "how many times?" over repeated trials.

Four conditions must all be met. If any one condition fails, the binomial model does not apply.

The distribution is discrete. X can only equal 0, 1, 2, ..., n — never a fraction.

Mean = np; variance = np(1−p). These two formulas describe the center and spread without needing the full PMF.

Bernoulli distribution is binomial with n = 1. Every Bernoulli trial is the atomic unit of a binomial experiment.

Sampling without replacement violates the independence condition. Use the hypergeometric distribution in that case instead.

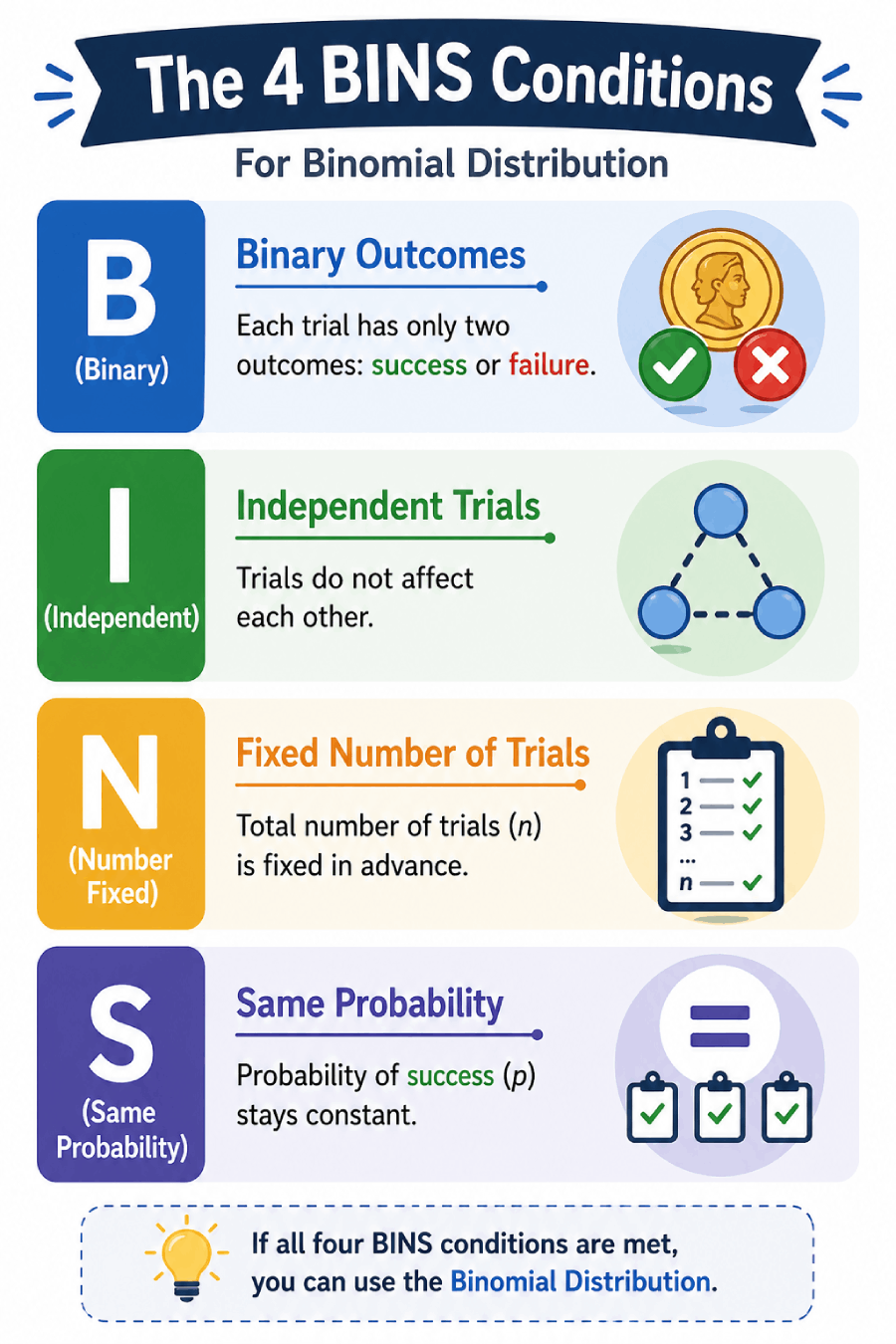

The 4 Conditions for Binomial Distribution

The BINS conditions: Binary outcomes, Independent trials, fixed Number of trials, and Same probability of success.

Before applying the binomial model, check all four conditions. A single violated condition means the model will give wrong answers — no matter how carefully you apply the formula afterward.

Fixed Number of Trials

The number of trials n is set in advance and does not change. You flip a coin exactly 20 times, not "until you get 5 heads."

Independent Trials

The outcome of one trial does not affect any other. Drawing cards with replacement is independent; drawing without replacement is not.

Binary Outcomes Only

Each trial ends in exactly one of two outcomes: success or failure. Everything that is not "success" counts as "failure."

Constant Probability

The probability of success p is identical on every trial. A fair coin always has p = 0.5, trial after trial.

Memory Aid: The BINS Acronym

Binary outcomes

Each trial has exactly two outcomes: success or failure.

Independent trials

Trials do not influence each other in any way.

Number of trials is fixed

n is determined before the experiment begins.

Same probability each trial

p remains constant across all n trials.

Sampling 10 students from a class of 25 without replacement? The probability of "success" changes after each draw — that is the hypergeometric distribution. Counting the number of customer calls per hour with no fixed n? That is the Poisson distribution.

Binomial Distribution Formula (PMF)

The probability mass function (PMF) gives the probability of observing exactly k successes in n trials. This is the formula you will use for most direct calculations:

P(X = k) = C(n,k) × p^k × (1−p)^(n−k)

n = number of trials

k = number of successes

p = P(success on one trial)

1−p = P(failure on one trial)

C(n,k) = number of ways to arrange k successes

Each of the three parts does distinct work. The binomial coefficient C(n,k) = n! / (k!(n−k)!) counts every possible arrangement of k successes among n positions. The term p^k gives the probability that k particular trials are all successes, and (1−p)^(n−k) gives the probability that the other n−k trials are all failures. Because the trials are independent, p^k × (1−p)^(n−k) is the probability of any one specific arrangement; multiplying by C(n,k) adds up all of the equally likely arrangements.
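As a rough illustration, the three parts of the formula can be computed separately in Python. This is a minimal sketch using only the standard library; the function name `binomial_pmf` is a label chosen here for illustration, not part of any particular package.

```python
from math import comb


def binomial_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k successes in n independent trials."""
    arrangements = comb(n, k)            # C(n, k): ways to place k successes
    success_part = p ** k                # k particular trials all succeed
    failure_part = (1 - p) ** (n - k)    # the other n - k trials all fail
    return arrangements * success_part * failure_part


# The coin question from the introduction: exactly 7 heads in 10 fair tosses.
print(round(binomial_pmf(7, 10, 0.5), 4))  # 0.1172
```

Summing the function over k = 0, 1, ..., n should give 1, which is a quick way to check any hand-written PMF.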

Cumulative Distribution Function (CDF)

The CDF answers "what is the probability of at most k successes?" by summing the PMF from 0 to k:

P(X ≤ k) = Σ C(n,i) × p^i × (1−p)^(n−i), summed over i = 0 to k

Use the PMF when you need the probability of exactly k successes. Use the CDF when you need the probability of k successes or fewer. For "at least k" probabilities, compute 1 − P(X ≤ k−1).
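The three kinds of question ("exactly", "at most", "at least") can be sketched side by side. The helper names below are hypothetical and the code assumes integer k with 0 ≤ p ≤ 1; it is meant as an illustration rather than a replacement for a statistics library.

```python
from math import comb


def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for X ~ B(n, p)."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)


def binomial_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k): add up the PMF for 0, 1, ..., k."""
    return sum(binomial_pmf(i, n, p) for i in range(k + 1))


def at_least(k: int, n: int, p: float) -> float:
    """P(X >= k), computed as 1 - P(X <= k - 1)."""
    return 1.0 - binomial_cdf(k - 1, n, p)


n, p = 10, 0.5
print(binomial_pmf(3, n, p))   # exactly 3 heads
print(binomial_cdf(3, n, p))   # 3 heads or fewer
print(at_least(3, n, p))       # 3 heads or more
```

Note that `at_least` subtracts the CDF at k − 1, not at k. Using k would drop the "exactly k" case from the answer.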

Mean, Variance & Standard Deviation

You do not need the full PMF to describe a binomial distribution. Three summary statistics, derived directly from n and p, capture its center and spread:

Mean: μ = np

Variance: σ² = np(1−p)

Standard deviation: σ = √(np(1−p))

If you flip a fair coin 100 times, the mean is 100 × 0.5 = 50 heads. The variance is 100 × 0.5 × 0.5 = 25, and the standard deviation is √25 = 5. A typical run lands within one standard deviation of the mean, between 45 and 55 heads inclusive, about 73% of the time. That is a little more than the 68% quoted for a normal curve, because the count is discrete and both endpoints are included.

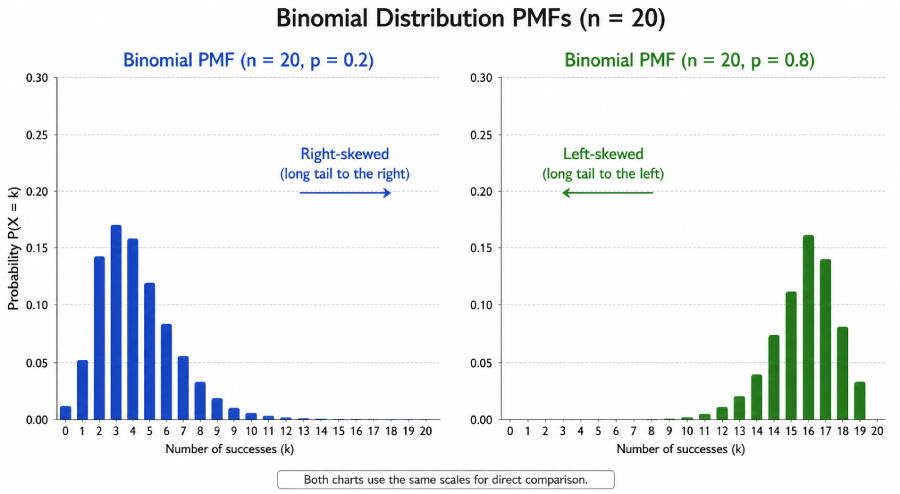

Skewness = (1−2p) / √(np(1−p)). When p = 0.5 the distribution is perfectly symmetric. When p < 0.5 it skews right; when p > 0.5 it skews left. Kurtosis = (1−6p(1−p)) / (np(1−p)) + 3.
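The summary formulas in this section translate directly into a few lines of Python. The sketch below assumes n ≥ 1 and 0 < p < 1 (otherwise the variance is zero and the skewness and kurtosis formulas divide by zero); `binomial_summary` is a name made up for this example.

```python
from math import sqrt


def binomial_summary(n: int, p: float) -> dict:
    """Center, spread, and shape statistics for X ~ B(n, p)."""
    q = 1 - p
    variance = n * p * q
    return {
        "mean": n * p,
        "variance": variance,
        "std_dev": sqrt(variance),
        "skewness": (1 - 2 * p) / sqrt(variance),
        "kurtosis": (1 - 6 * p * q) / variance + 3,
    }


# The 100-flip fair coin: mean 50, variance 25, standard deviation 5, zero skew.
for name, value in binomial_summary(100, 0.5).items():
    print(f"{name}: {value:.4f}")
```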

How to Calculate Binomial Probability: Step by Step

Calculating binomial probabilities follows a repeatable six-step process. Work through each step in order and the formula becomes mechanical — no guesswork required.

A fair coin is flipped 8 times. What is the probability of getting exactly 3 heads?

Identify parameters: n = 8 (total flips), k = 3 (heads wanted), p = 0.5 (fair coin), q = 1 − 0.5 = 0.5

Compute C(8,3): 8! / (3! × 5!) = (8 × 7 × 6) / (3 × 2 × 1) = 336 / 6 = 56

Compute p^k: 0.5^3 = 0.125

Compute (1−p)^(n−k): 0.5^5 = 0.03125

Multiply: P(X = 3) = 56 × 0.125 × 0.03125 = 56 × 0.00390625 = 0.2188

Answer: There is a 21.9% probability of getting exactly 3 heads in 8 coin flips.
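The same steps can be mirrored line by line in Python, which makes it easy to compare each intermediate value against the hand calculation. This is only an illustrative sketch; the variable names are arbitrary.

```python
from math import factorial

# Step 1: identify the parameters.
n, k, p = 8, 3, 0.5
q = 1 - p

# Step 2: binomial coefficient C(8, 3) = 8! / (3! * 5!).
coefficient = factorial(n) // (factorial(k) * factorial(n - k))

# Steps 3 and 4: the success and failure factors.
p_to_k = p ** k
q_to_rest = q ** (n - k)

# Step 5: multiply the three parts.
probability = coefficient * p_to_k * q_to_rest

print(coefficient)             # 56
print(p_to_k)                  # 0.125
print(q_to_rest)               # 0.03125
print(round(probability, 4))   # 0.2188
```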

A drug trial shows a 70% success rate. If 10 patients receive the drug, what is the probability that exactly 7 respond?

Identify parameters: n = 10, k = 7, p = 0.70, q = 0.30. Check BINS conditions: fixed n ✓, independent patients ✓, binary outcome ✓, constant p ✓.

Compute C(10,7): 10! / (7! × 3!) = (10 × 9 × 8) / 6 = 720 / 6 = 120

Compute p^7: 0.70^7 = 0.0823543

Compute (1−p)^3: 0.30^3 = 0.027

Multiply: P(X = 7) = 120 × 0.0823543 × 0.027 ≈ 120 × 0.002223 ≈ 0.2668

Answer: There is approximately a 26.7% probability that exactly 7 of the 10 patients respond. Mean = 10 × 0.70 = 7 patients (the most likely outcome). Variance = 10 × 0.70 × 0.30 = 2.1.
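One way to sanity-check a figure like 26.7% is to simulate the experiment many times and count how often exactly 7 of 10 patients respond. The sketch below uses Python's standard `random` module with a fixed seed; the function name, the seed, and the number of runs are arbitrary choices for illustration, and the estimate will only approximate the exact value.

```python
import random


def simulate_exactly_k(n: int, k: int, p: float, runs: int, seed: int = 0) -> float:
    """Estimate P(X = k) by repeating the n-trial experiment many times."""
    rng = random.Random(seed)   # fixed seed so the run is repeatable
    hits = 0
    for _ in range(runs):
        # Each patient responds independently with probability p.
        successes = sum(rng.random() < p for _ in range(n))
        if successes == k:
            hits += 1
    return hits / runs


estimate = simulate_exactly_k(n=10, k=7, p=0.70, runs=100_000)
print(estimate)   # should land close to the formula's 0.2668
```

Simulation is slower and noisier than the formula, but it works unchanged when a condition such as constant p is relaxed, which makes it a useful cross-check.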

A factory line has a 5% defect rate. In a batch of 20 items, what is the probability that at most 2 items are defective?

Identify parameters: n = 20, p = 0.05, q = 0.95. We need P(X ≤ 2) = P(X=0) + P(X=1) + P(X=2).

P(X=0): C(20,0) × 0.05^0 × 0.95^20 = 1 × 1 × 0.3585 = 0.3585

P(X=1): C(20,1) × 0.05^1 × 0.95^19 = 20 × 0.05 × 0.3774 = 0.3774

P(X=2): C(20,2) × 0.05^2 × 0.95^18 = 190 × 0.0025 × 0.3972 = 0.1887

Sum all three: P(X ≤ 2) = 0.3585 + 0.3774 + 0.1887 = 0.9246

Answer: There is a 92.5% probability that a batch of 20 items contains 2 or fewer defectives. A quality control team can use this to set acceptance thresholds for incoming shipments.
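For an "at most" question, the individual terms and their running total can be printed together. The sketch below repeats the defect example with the standard library only. At full precision the total comes out at roughly 0.9245; the hand calculation differs in the last digit because it adds terms that were already rounded.

```python
from math import comb

n, p = 20, 0.05

# One PMF term for each allowed defect count: 0, 1, and 2.
terms = [comb(n, k) * p ** k * (1 - p) ** (n - k) for k in range(3)]
for k, term in enumerate(terms):
    print(f"P(X={k}) = {term:.4f}")

at_most_two = sum(terms)
print(f"P(X<=2) = {at_most_two:.4f}")
print(f"P(X>=3) = {1 - at_most_two:.4f}")   # chance of 3 or more defectives
```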

Calculating Binomial Probability in Excel, Python & R

For large n values, manual calculation becomes tedious. Every major statistical software package has built-in binomial functions that handle the arithmetic in one line.

Excel

```
' PMF: probability of exactly k successes
=BINOM.DIST(k, n, p, FALSE)

' CDF: probability of k or fewer successes
=BINOM.DIST(k, n, p, TRUE)

' Example: P(X = 7) with n=10, p=0.7 (returns 0.2668)
=BINOM.DIST(7, 10, 0.7, FALSE)
```

Python (SciPy)

```python
from scipy.stats import binom

# PMF: exactly k successes
binom.pmf(7, n=10, p=0.7)   # → 0.2668

# CDF: at most k successes
binom.cdf(7, n=10, p=0.7)   # → 0.6172

# Mean and variance
binom.mean(n=10, p=0.7)     # → 7.0
binom.var(n=10, p=0.7)      # ≈ 2.1
```

R

```r
# PMF: exactly k successes
dbinom(7, size=10, prob=0.7)   # → 0.2668

# CDF: at most k successes
pbinom(7, size=10, prob=0.7)   # → 0.6172

# Generate a full PMF table
dbinom(0:10, size=10, prob=0.7)
```

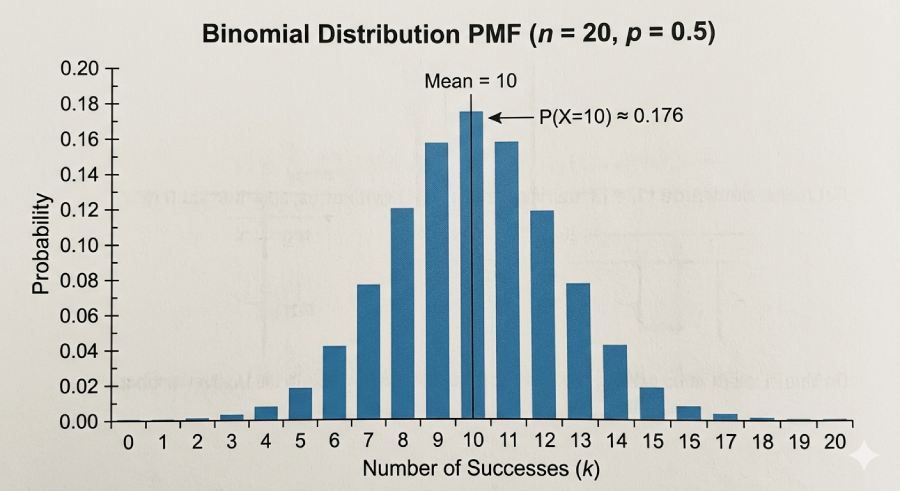

Properties and Shape of Binomial Distribution

The shape of a binomial distribution is determined entirely by n and p. Understanding how these parameters control the shape lets you visualize outcomes before calculating a single probability.

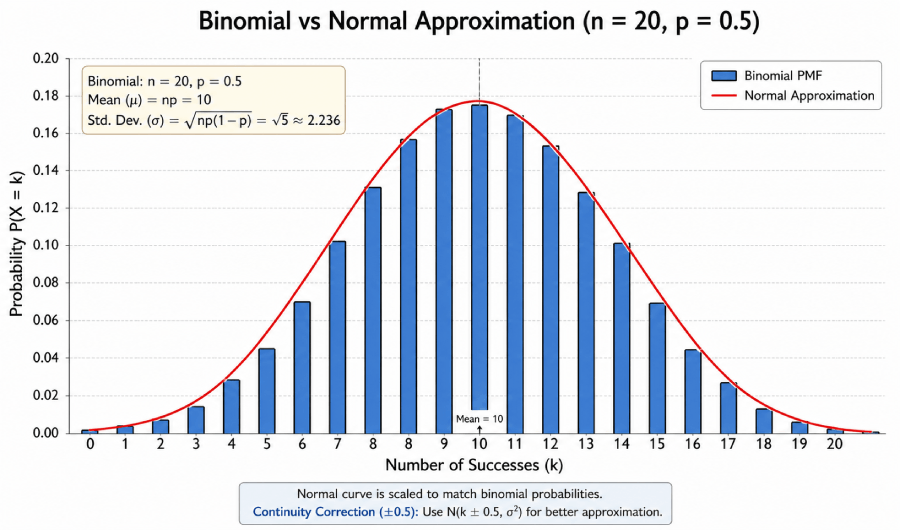

Symmetric binomial distribution (n = 20, p = 0.5). The peak occurs at the mean (np = 10), with equal spread on both sides.

| Parameter Combination | Shape | Skewness Direction | Typical Scenario |
|---|---|---|---|
| p = 0.5 | Symmetric, bell-like | None (zero skewness) | Fair coin flips |
| p < 0.5 | Right-skewed | Positive skew | Rare disease diagnosis, defects |
| p > 0.5 | Left-skewed | Negative skew | High-accuracy tests, drug response |
| Large n, any p | Approaches normal | Near zero | Large-sample quality control |
| Very small p, large n | Right-skewed, sparse | Strong positive | Manufacturing rare defects |

Right-skewed distribution (p = 0.2). Most outcomes cluster at the low end of the range, around np, with a long tail to the right.

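As n grows large with p held fixed, the binomial distribution smooths out and begins to look like a bell curve. This is the Central Limit Theorem in action (for the binomial specifically, the result is known as the de Moivre–Laplace theorem). The standard deviation grows only like √n while the range of possible values grows like n, so the probability mass concentrates in a band around the mean that is narrow relative to the full range from 0 to n.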
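A quick way to see these shapes without a plotting library is to print the PMF as a text histogram. The sketch below is illustrative only: `pmf_table` and `text_histogram` are hypothetical helper names, and the bar width of 40 characters is an arbitrary choice.

```python
from math import comb, sqrt


def pmf_table(n: int, p: float) -> list:
    """P(X = k) for every k from 0 to n."""
    return [comb(n, k) * p ** k * (1 - p) ** (n - k) for k in range(n + 1)]


def text_histogram(n: int, p: float, width: int = 40) -> str:
    """One row of '#' per value of k, scaled so the tallest bar is `width` long."""
    probs = pmf_table(n, p)
    peak = max(probs)
    rows = []
    for k, prob in enumerate(probs):
        bar = "#" * round(width * prob / peak)
        rows.append(f"{k:>3} {bar}")
    return "\n".join(rows)


print(text_histogram(20, 0.5))   # symmetric around 10
print(text_histogram(20, 0.2))   # bulk at the low end, tail to the right

# For fixed p, skewness shrinks like 1/sqrt(n) as n grows.
for n in (10, 100, 1000):
    print(n, round((1 - 2 * 0.2) / sqrt(n * 0.2 * 0.8), 3))
```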

Real-Life Applications of Binomial Distribution

The binomial model appears wherever outcomes are binary and trials repeat under controlled conditions. Below are five fields where it drives actual decisions.

Medicine & Clinical Trials

A drug has a 65% response rate. Out of 15 patients in a pilot study, what is the probability that at least 10 respond? Binomial distribution gives the exact probability needed for go/no-go decisions.

Quality Control

A factory accepts a shipment if fewer than 3 items in a sample of 50 are defective. With a known defect rate of 4%, binomial tells you the probability of passing — or failing — that inspection.

Finance & Risk

Credit analysts model loan defaults as independent Bernoulli trials. Binomial distribution estimates the probability that k of n borrowers in a portfolio default within a year.

Machine Learning & A/B Testing

In A/B testing, each visitor either converts or does not, so the number of conversions is binomial. With n visitors and a conversion rate p, binomial distribution underlies the statistical significance tests used to declare a winner.

Sports Analytics

A baseball player with a .300 batting average has roughly a 30% probability of a hit on each at-bat. Over 10 at-bats, binomial distribution gives the probability of each possible hit count from 0 to 10, assuming the at-bats are independent.
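Several of the scenarios above reduce to summing the PMF over a range of counts. The sketch below works through the clinical, quality-control, and batting questions using the numbers stated in the text; `pmf` and `prob_between` are hypothetical helper names, and each calculation assumes the four binomial conditions hold.

```python
from math import comb


def pmf(k, n, p):
    """P(X = k) for X ~ B(n, p)."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)


def prob_between(lo, hi, n, p):
    """P(lo <= X <= hi) for X ~ B(n, p)."""
    return sum(pmf(k, n, p) for k in range(lo, hi + 1))


# Clinical pilot: at least 10 responders out of 15 at a 65% response rate.
pilot_success = prob_between(10, 15, n=15, p=0.65)

# Incoming inspection: fewer than 3 defectives (0, 1, or 2) in 50 items at 4%.
shipment_accepted = prob_between(0, 2, n=50, p=0.04)

# Batting: probability of each hit count over 10 at-bats at a .300 average.
hit_distribution = [pmf(k, 10, 0.3) for k in range(11)]

print(f"P(at least 10 of 15 respond) = {pilot_success:.3f}")
print(f"P(shipment accepted)         = {shipment_accepted:.3f}")
for hits, prob in enumerate(hit_distribution):
    print(f"{hits:>2} hits: {prob:.3f}")
```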

Real-World Example

COVID-19 Vaccine Efficacy Trials

In Phase III vaccine trials, each participant is treated as an independent trial with two outcomes: infection or no infection. With tens of thousands of participants and a known background infection rate, binomial (and related) models compute the probability of observing the recorded number of infections under a null hypothesis of no vaccine effect. The resulting p-values drove the emergency authorization decisions that shaped global public health policy in 2020 and 2021.

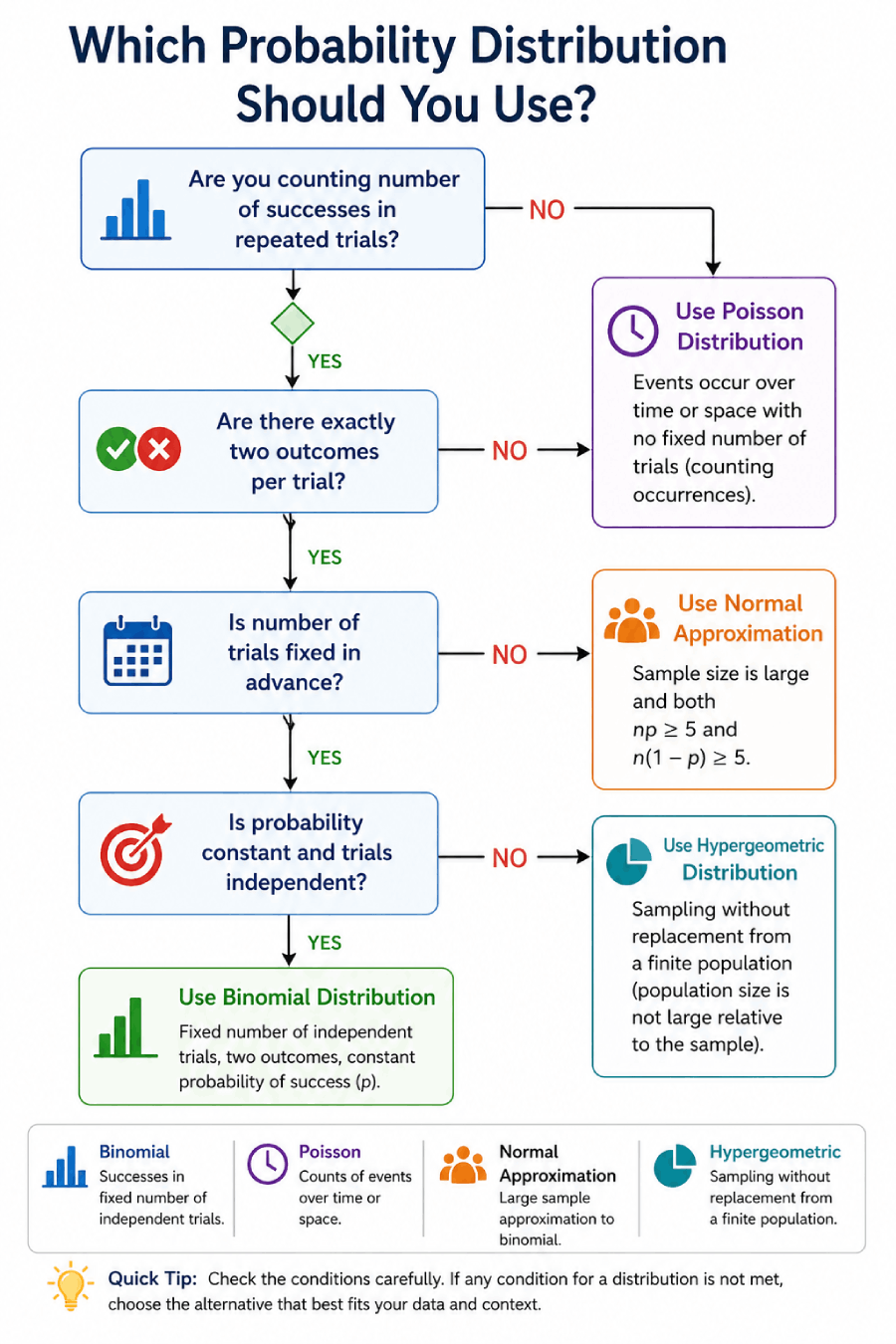

Binomial vs. Poisson vs. Normal Distribution

Decision flowchart for selecting between binomial, Poisson, normal, and hypergeometric distributions.

The binomial, Poisson, and normal distributions are often confused because they occupy neighboring territory in probability theory. The table below separates them clearly. For deeper exploration, the normal distribution guide and the statistics and probability overview on Statistics Fundamentals cover the mathematical relationships in detail.

| Attribute | Binomial | Poisson | Normal |
|---|---|---|---|
| Type | Discrete | Discrete | Continuous |
| Parameters | n, p | λ (rate) | μ, σ |
| Number of trials | Fixed (n known) | Not fixed (or very large) | N/A |
| Possible values of X | 0, 1, 2, ..., n | 0, 1, 2, ... (no upper bound) | Any real number |
| Mean | np | λ | μ |
| Variance | np(1−p) | λ | σ² |
| Mean = Variance? | Only in the degenerate case p = 0 | Always (both equal λ) | No fixed relationship |
| Best used when | Fixed n, binary outcomes, constant p | Rare events, large n, small p, np = λ | Continuous data, large n (CLT) |
| Example | Defects in 50 units | Calls per hour at a call center | Heights, measurement errors |

Binomial to Poisson: The Limiting Relationship

As n → ∞ and p → 0, while the product λ = np stays constant, the binomial distribution converges to Poisson(λ). In practice, if n ≥ 20 and p ≤ 0.05 — or n ≥ 100 and np ≤ 10 — the Poisson approximation is accurate and far easier to compute. The Poisson distribution (Wikipedia) documents the full derivation of this limit.
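To get a feel for how close the approximation is, the two PMFs can be compared term by term. In the sketch below, n = 100 and p = 0.03 are hypothetical values picked only because they satisfy the n ≥ 100, np ≤ 10 rule of thumb; other values will give different gaps.

```python
from math import comb, exp, factorial


def binomial_pmf(k, n, p):
    return comb(n, k) * p ** k * (1 - p) ** (n - k)


def poisson_pmf(k, lam):
    return exp(-lam) * lam ** k / factorial(k)


n, p = 100, 0.03   # hypothetical values with n >= 100 and np <= 10
lam = n * p        # matching Poisson rate

for k in range(7):
    b = binomial_pmf(k, n, p)
    q = poisson_pmf(k, lam)
    print(f"k={k}  binomial={b:.4f}  poisson={q:.4f}  diff={abs(b - q):.4f}")

# Largest pointwise disagreement over the whole binomial support.
largest_gap = max(abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam))
                  for k in range(n + 1))
print(f"largest gap: {largest_gap:.4f}")
```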

Binomial to Normal: The Approximation Rule

Binomial distribution (bars) overlaid with its normal approximation (curve). The fit improves as n becomes larger and both np and n(1−p) are sufficiently large.

When both np ≥ 5 and n(1−p) ≥ 5, the binomial distribution is well-approximated by N(np, np(1−p)). Add a continuity correction of ±0.5 to account for the shift from discrete to continuous. This approximation is why the normal distribution is so central to large-sample binomial inference. You can verify critical values using the Z-table at Statistics Fundamentals.
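The effect of the continuity correction is easiest to see numerically. The sketch below compares the exact binomial CDF with the normal approximation, with and without the +0.5 shift, for the 100-flip fair coin. It builds the normal CDF from `math.erf`; the cutoff k = 55 is an arbitrary illustrative choice.

```python
from math import comb, erf, sqrt


def binomial_cdf(k, n, p):
    """Exact P(X <= k) for X ~ B(n, p)."""
    return sum(comb(n, i) * p ** i * (1 - p) ** (n - i) for i in range(k + 1))


def normal_cdf(x, mu, sigma):
    """P(Y <= x) for Y ~ N(mu, sigma^2), via the error function."""
    return 0.5 * (1 + erf((x - mu) / (sigma * sqrt(2))))


n, p, k = 100, 0.5, 55                     # np = n(1 - p) = 50, well above 5
mu, sigma = n * p, sqrt(n * p * (1 - p))

exact = binomial_cdf(k, n, p)
with_correction = normal_cdf(k + 0.5, mu, sigma)   # P(X <= k) -> P(Y <= k + 0.5)
without_correction = normal_cdf(k, mu, sigma)

print(f"exact binomial:        {exact:.4f}")
print(f"normal, corrected:     {with_correction:.4f}")
print(f"normal, no correction: {without_correction:.4f}")
```

In this setting the corrected value sits much closer to the exact answer than the uncorrected one, which is the reason the ±0.5 adjustment is recommended.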

If n is fixed and you are counting successes in binary trials → Binomial. If events are rare and n is effectively infinite → Poisson. If n is large enough that np ≥ 5 and n(1−p) ≥ 5 → approximate with Normal.

Common Mistakes When Using Binomial Distribution

These five errors appear repeatedly in statistics courses and applied work. Each one produces incorrect probabilities — sometimes dramatically so.

Sampling Without Replacement and Assuming Independence

Drawing 5 cards from a 52-card deck without replacement changes p after each draw. The trials are not independent. Use hypergeometric distribution instead, or binomial only when the sample is less than 10% of the population (the 10% rule).
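The gap between the two models can be checked directly. The sketch below counts aces in a 5-card hand, treating "ace" as the success (a choice made here for illustration), and compares the exact hypergeometric probabilities with a binomial that wrongly assumes independent draws with p = 4/52.

```python
from math import comb


def hypergeom_pmf(k, population, successes, draws):
    """P(k successes) when drawing `draws` items without replacement."""
    return (comb(successes, k) * comb(population - successes, draws - k)
            / comb(population, draws))


def binomial_pmf(k, n, p):
    """P(k successes) if every draw were independent with the same p."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)


# 4 aces in a 52-card deck, 5 cards drawn.
for k in range(5):
    exact = hypergeom_pmf(k, 52, 4, 5)
    approx = binomial_pmf(k, 5, 4 / 52)
    print(f"{k} aces: hypergeometric={exact:.5f}  binomial={approx:.5f}")
```

A 5-card hand is about 9.6% of the deck, so the two columns are close for small counts, but the binomial noticeably overstates the rare outcomes such as drawing all four aces.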

Confusing P(X = k) with P(X ≤ k)

These are completely different quantities. P(X = 3) is the probability of exactly 3 successes. P(X ≤ 3) sums all probabilities from 0 to 3. Mixing them up produces answers that can be off by a factor of 3 or more.

Applying Binomial When p Is Not Constant

If a player's scoring probability changes depending on whether the game is home or away, p is not constant across trials. A weighted mixture of distributions, not a single binomial, models this correctly.

Forgetting to Verify All Four Conditions First

Many students jump straight to the formula without checking BINS. A single violated condition invalidates the model. The formula will still produce a number — but that number will be wrong.

Using Binomial When n Is Not Fixed

"Count calls until you get 3 complaints" — this has no fixed n. The number of trials is itself a random variable. This is a negative binomial (or Pascal distribution) problem, not binomial.

Summary: Binomial Distribution Quick Reference

| Property | Formula / Value | Notes |
|---|---|---|
| Notation | X ~ B(n, p) | n = trials, p = success probability |
| Support | k = 0, 1, 2, ..., n | Discrete: only whole numbers |
| PMF | C(n,k) × p^k × (1−p)^(n−k) | Probability of exactly k successes |
| CDF | Σ P(X=i) from i=0 to k | Probability of k or fewer successes |
| Mean | μ = np | Expected number of successes |
| Variance | σ² = np(1−p) | Also written npq where q = 1−p |
| Std. Deviation | σ = √(np(1−p)) | Square root of variance |
| Skewness | (1−2p) / √(np(1−p)) | Zero when p = 0.5 |
| Special case | n=1 → Bernoulli(p) | Single trial version |
| Poisson limit | n→∞, p→0, np=λ | Use when n≥20, p≤0.05 |
| Normal approx. | np≥5 and n(1−p)≥5 | Add continuity correction ±0.5 |

Related Distributions

Binomial distribution is part of a family of discrete distributions, each handling a slightly different version of the counting problem. For statistical tables used alongside these distributions, visit the statistical tables section on Statistics Fundamentals, which includes chi-square, t-distribution, and F-distribution tables for hypothesis testing workflows.

Bernoulli (n = 1)

- Single trial only

- X ∈ {0, 1}

- Mean = p

- Variance = p(1−p)

- Building block of binomial

Geometric

- Counts trials until first success

- n is not fixed — it is the outcome

- X ∈ {1, 2, 3, ...}

- Same independence and constant p conditions

Negative Binomial

- Counts trials until r-th success

- Generalizes geometric (r = 1)

- Used in overdispersed count data

- Mean = r/p; Variance = r(1−p)/p²
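For the "trials until the r-th success" version described above, the PMF can be sketched in a few lines. The function name is made up for this example, and the 10% per-call complaint rate is a hypothetical value chosen only to have something to compute.

```python
from math import comb


def trials_until_rth_success_pmf(n_trials, r, p):
    """P(the r-th success arrives exactly on trial n_trials)."""
    if n_trials < r:
        return 0.0
    # The last trial must be a success; the other r - 1 successes can
    # fall anywhere among the first n_trials - 1 trials.
    return comb(n_trials - 1, r - 1) * p ** r * (1 - p) ** (n_trials - r)


p_complaint = 0.10   # hypothetical complaint rate, for illustration only
for calls in (3, 10, 30, 60):
    prob = trials_until_rth_success_pmf(calls, 3, p_complaint)
    print(f"3rd complaint on call {calls}: {prob:.4f}")

# Numerical check of the mean r/p, truncating the infinite sum at 2000 calls.
mean_calls = sum(n * trials_until_rth_success_pmf(n, 3, p_complaint)
                 for n in range(3, 2000))
print(round(mean_calls, 2))   # close to r/p = 30
```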

Hypergeometric

- Sampling without replacement

- Population is finite and known

- p changes after each draw

- Use when sample > 10% of population

For worked examples applying these distributions in hypothesis testing contexts, the hypothesis testing guide on Statistics Fundamentals covers the binomial test, proportion z-test, and chi-square goodness-of-fit test — all grounded in the principles here. The random variables section provides the formal expectation and variance derivations.

Frequently Asked Questions

What is binomial distribution in simple terms?

Binomial distribution answers the question "how many successes will I get if I repeat the same yes/no trial n times?" Each trial is independent, has the same probability of success, and produces exactly one of two outcomes. The distribution gives you the probability for every possible count from 0 successes to n successes.

What is the binomial distribution formula?

P(X = k) = C(n,k) × p^k × (1−p)^(n−k), where n is the number of trials, k is the number of successes you want to find the probability for, p is the probability of success on a single trial, and C(n,k) = n! / (k!(n−k)!) is the binomial coefficient counting the number of arrangements. This is the probability mass function (PMF) of the binomial distribution.

What are the four conditions for a binomial distribution?

All four must hold simultaneously: (1) Fixed number of trials: n is set in advance. (2) Independent trials: one result cannot influence another. (3) Binary outcomes: each trial ends in success or failure only. (4) Constant probability: p is identical on every trial. Use the BINS acronym (Binary, Independent, Number fixed, Same probability) to check each condition before applying the formula.

What are the mean, variance, and standard deviation of a binomial distribution?

For X ~ B(n, p): the mean is μ = n × p (the expected number of successes), the variance is σ² = n × p × (1−p), and the standard deviation is σ = √(n × p × (1−p)). As a concrete example, for n = 20 and p = 0.4: mean = 8, variance = 4.8, standard deviation ≈ 2.19.

What is the difference between binomial and Poisson distribution?

Binomial distribution requires a fixed, known number of trials n and a constant probability p. Poisson distribution models counts of rare events in a fixed time or space interval, where n is either unknown or very large and p is very small, so the model is defined only by the rate λ = np. Poisson is the limit of binomial as n → ∞ and p → 0 with np = λ constant. A rule of thumb: use Poisson when n ≥ 20 and p ≤ 0.05.

When can the normal distribution approximate the binomial?

The normal approximation to binomial is accurate when both np ≥ 5 and n(1−p) ≥ 5. When these conditions are met, X ~ B(n, p) ≈ N(np, np(1−p)). Always apply a continuity correction of ±0.5 when computing probabilities: P(X ≤ k) with binomial becomes P(X ≤ k + 0.5) with normal. This approximation is particularly useful for large n where the exact binomial calculation requires summing many terms.

Is binomial distribution discrete or continuous?

Binomial distribution is discrete. The random variable X can only take integer values: 0, 1, 2, ..., n. You cannot have 3.7 successes. This is why it has a probability mass function (PMF) rather than a probability density function (PDF). Its graph is a bar chart, not a smooth curve. The normal distribution, by contrast, is continuous and has a PDF.

How is binomial distribution used in machine learning?

In machine learning, binomial distribution underpins several core methods. Logistic regression outputs probabilities for binary classification; the likelihood function it maximizes is binomial. Naive Bayes classifiers use binomial (or Bernoulli) distributions for binary features. A/B testing of model versions uses binomial hypothesis tests to determine whether a difference in conversion rates is statistically significant. Cross-validation also relies on binomial models when measuring classification error rates across held-out folds.